Friday, January 30, 2015

Solutions to exercises of DBDA2E through chapter 18 now available

Exercise Solutions for Chapters 1-18 of DBDA2E are now available at the book's web site.

Monday, January 26, 2015

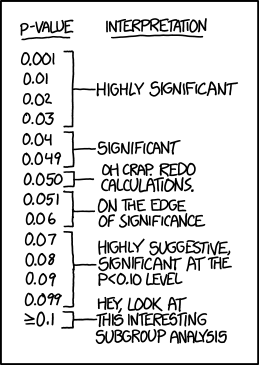

Institutionalized publication thresholds, p values, and XKCD

XKCD today is about p values (see image at right). I think that what XKCD is pointing out is not so much a problem with p values as with

strongly institutionalized publication thresholds and the ritual of mindless statistics, as Gigerenzer would say. The same problem

could arise with strongly institutionalized publication thresholds for

Bayes factors, or even for HDI-and-ROPEs. One thing that's nice about the

HDI-and-ROPE approach is that it's explicitly about magnitude and

uncertainty, to help nudge thinking away from mindless decision

thresholds.

(Thank you to Kevin J. McCann for pointing me to XKCD today.)

P.S. added 30-January-2015: Gigerenzer has a new article, extending the one linked above to Bayes factors. Links: http://jom.sagepub.com/content/41/2/421 and http://jom.sagepub.com/content/41/2/421.full.pdf+html Surrogate Science: The Idol of a Universal Method for Scientific Inference. Gerd Gigerenzer and Julian N. Marewski. Journal of Management, Vol. 41, No. 2, February 2015, 421–440.

(Thank you to Kevin J. McCann for pointing me to XKCD today.)

P.S. added 30-January-2015: Gigerenzer has a new article, extending the one linked above to Bayes factors. Links: http://jom.sagepub.com/content/41/2/421 and http://jom.sagepub.com/content/41/2/421.full.pdf+html Surrogate Science: The Idol of a Universal Method for Scientific Inference. Gerd Gigerenzer and Julian N. Marewski. Journal of Management, Vol. 41, No. 2, February 2015, 421–440.

In this article, we make three points.

1. There is no universal method of scientific inference but, rather, a toolbox of useful statistical methods. In the absence of a universal method, its followers worship surrogate idols, such as significant p values. The inevitable gap between the ideal and its surrogate is bridged with delusions—for instance, that a p value of 1% indicates a 99% chance of replication. These mistaken beliefs do much harm: among others, by promoting irreproducible results.

2. If the proclaimed “Bayesian revolution” were to take place, the danger is that the idol of a universal method might survive in a new guise, proclaiming that all uncertainty can be reduced to subjective probabilities. And the automatic calculation of significance levels could be revived by similar routines for Bayes factors. That would turn the revolution into a re-volution— back to square one.

These first two points are not “philosophical” but have very practical consequences, because

3. Statistical methods are not simply applied to a discipline; they change the discipline itself, and vice versa. In the social sciences, statistical tools have changed the nature of research, making inference its major concern and degrading replication, the minimization of measurement error, and other core values to secondary importance.

Friday, January 16, 2015

Free introduction to doing Bayesian data analysis - Share with friends!

The goal of Chapter 2 is to introduce the conceptual framework of Bayesian data analysis. Bayesian data analysis has two foundational ideas. The first idea is that Bayesian inference is reallocation of credibility across possibilities. The second foundational idea is that the possibilities, over which we allocate credibility, are parameter values in meaningful mathematical models. These two fundamental ideas form the conceptual foundation for every analysis in this book. Simple examples of these ideas are presented in this chapter. The rest of the book merely fills in the mathematical and computational details for specific applications of these two ideas. This chapter also explains the basic procedural steps shared by every Bayesian analysis.The chapter is here.

Thursday, January 8, 2015

Link to: Probable Points and Credible Intervals, Decision Theory

See Rasmus Bååth's excellent blog post about Bayesian decision theory here.

Saturday, January 3, 2015

How to specify an informed prior in JAGS/BUGS

An emailer asks about how to specify an informed prior in JAGS/BUGS. Here are some condensed excerpts of the question and my reply. Of course, while the discussion here refers to JAGS/BUGS, the same considerations apply to Stan.

We

do the analogous process for the marginals of the other parameters. For

sigma, we would not use a normal, but instead use perhaps a gamma

because its support is nonnegative and it is skewed like the marginal

posterior on sigma.

So

far, we have addressed only the marginal distributions, and assumed

there is no correlational structure among the parameters in PD1.

Although some models and data might yield posterior distributions with

little correlational structure, this is not usually true. For example,

in linear regression (when using non-standardized data) there is usually

a strong correlation between b0 and b1. As another example, in "robust"

models that use a t distribution to accommodate outliers, there can be a

strong correlation between the scale parameter (sigma) and the

normality (df) parameter. Therefore, a more accurate approximation

should also express the correlations among parameters.

Dear Prof. Kruschke,

I'm [on the faculty at ... university]. My name is [...]. I'm learning Bayesian method by reading your book, [1st edition]. I'm writing to you for asking some questions on determination of the prior for parameters.

I try to use the method in your book to estimate parameters in [an application that involves linear regression on logarithmically scaled data].

lnW=lna+b*lnL is similar to y=beta0+beta1*x. Denote lnW as y, lnL as x, lna as beta0 and b as beta1. I try to use the linear model to estimate parameters.

According to P.347 of your book, it's difficult for sampling algorithms to explore when values of the slope and intercept parameters tend to be tightly correlated. In fact, parameter a and b have negative correlation. So, I try to standardize the data x and y according to the method in your book. I get the model zy=zbeta0+zbeta1*zx. beta0=zbeta0*SDy+My-zbeta1*

SDy*Mx/SDx, beta1=zbeta1*SDy/SDx.

I have some questions on determination of the prior for zbeta0 and zbeta1. In fact, I know the prior of parameter a and b. For example, a~dunif(0.0001, 0.273), b~dunif(1.96,3.94). Can I use the next code to determine the prior for zeta0 and zeta1?

zbeta1<-b*SDx/SDy

zbeta0<-(zbeta1*SDy*Mx/SDx-My+lna)/SDy

b~dunif(1.96, 3.94)

a~dunif(0.0001,0.273)

Today I read chapter 23 of the book. I learned the method about reparameterization of probability distribution on P.516 in sector 23.4. I am confused if I need to use the method on P.516 to transform the prior of parameter a and b to the prior of parameter zbeta0 and zbeta1.

Would you like to give me some instruction about these questions?

Thanks for your consideration.

With best wishes for Christmas and New Year.

My reply:

Dear Dr. [...]:

Thank you for your message and for your interest in the book.

Please accept my apologies for the delay in my reply. [See https://sites.google.com/site/doingbayesiandataanalysis/contact]

Your questions do not have quick answers, but at least here are some ideas.

There

are different aspects involved in your application. First, there is the

issue of how to specify a prior based on previous data. Second, there

is the issue of reparameterizing the prior when the data scales are

transformed. I will focus on the first issue.

Suppose we

are doing simple linear regression, with parameters b0 (intercept), b1

(slope), and sigma (standard deviation of normally distributed noise).

Suppose that previous research provided data set D1, and that we do

Bayesian estimation of parameters starting with a vague prior, yielding

posterior distribution PD1 = p(b0,b1,sigma|D1). This distribution, PD1,

should be the prior for subsequent data.

We would like to

express PD1 in JAGS/BUGS. Unfortunately, in general, this cannot be done

exactly. There may be some models for which the posterior takes a

simple mathematical form that can be exactly expressed in JAGS/BUGS, but

in general the posterior is a complicated distribution, which is why we

use MCMC methods in the first place! Therefore we must express PD1 only

approximately.

One very rough approach would be to

consider only the marginal distributions of the individual parameters in

PD1, and approximate each marginal distribution with some reasonable

built in distribution in JAGS/BUGS. For example, consider b0. The MCMC

sample of PD1 has provided several thousand representative credible

values of b0, and a histogram of the marginal distribution of b0

suggests that a normal distribution might be a reasonable approximation

(of the marginal distribution of b0). So we find the mean, mub0, and

standard deviation, sb0, of b0 in the MCMC sample of PD1, and specify

the prior in JAGS as

b0 ~ dnorm( mub0 , 1/sb0^2 )

Notice that the expression of the

prior should not, typically, be uniform on a limited range. All that

would do is cut off the range of the subsequent posterior. If the data

"want" parameter values near the limit of the range, then the posterior

would pile up against the limit, like a heap of mulch against a fence.

More fundamentally, a uniform distribution does not reasonably approximate the posterior from any conceivable previous data (assuming the "protoprior" for the initial data is vague).

One

way to express correlations among parameters is to approximate their

joint distribution by a multivariate normal. For example, in linear

regression, we might consider the joint distribution of b0 and b1 in the

MCMC sample of PD1, and approximate it with a bivariate normal. The

bivariate normal has a mean vector with 2 means, and a covariance matrix

with 3 distinct components (i.e., the variance of b0, the variance of

b1, and the covariance of b0 and b1). With those 5 values estimated from

the MCMC sample of PD1, we would create a prior in JAGS by putting b0

and b1 in a vector, and specifying a prior on the vector as a

multivariate normal with the means and covariance matrix obtained from

the prior MCMC sample.

As more parameters are involved, it

becomes difficult to write down all the considerations of constructing a

good mathematical approximation to a high-dimensional MCMC

distribution. You have to take a good look at the specific prior you are

trying to emulate, and make a good argument that you have captured its

essentials.

There is a way to bypass all of the worries

about approximating a previous MCMC sample. Instead of using the

posterior from the previous data, just combine the previous data with

your new data, and do the analysis on the combined data. This will give

you an exact answer, but at the cost of running on a large data set (and

requiring all the previous data).

Hope this helps. Thanks again for your interest.

John

Friday, January 2, 2015

Hard cover print book of DBDA2E back in stock, with 30% discount

Subscribe to:

Posts (Atom)